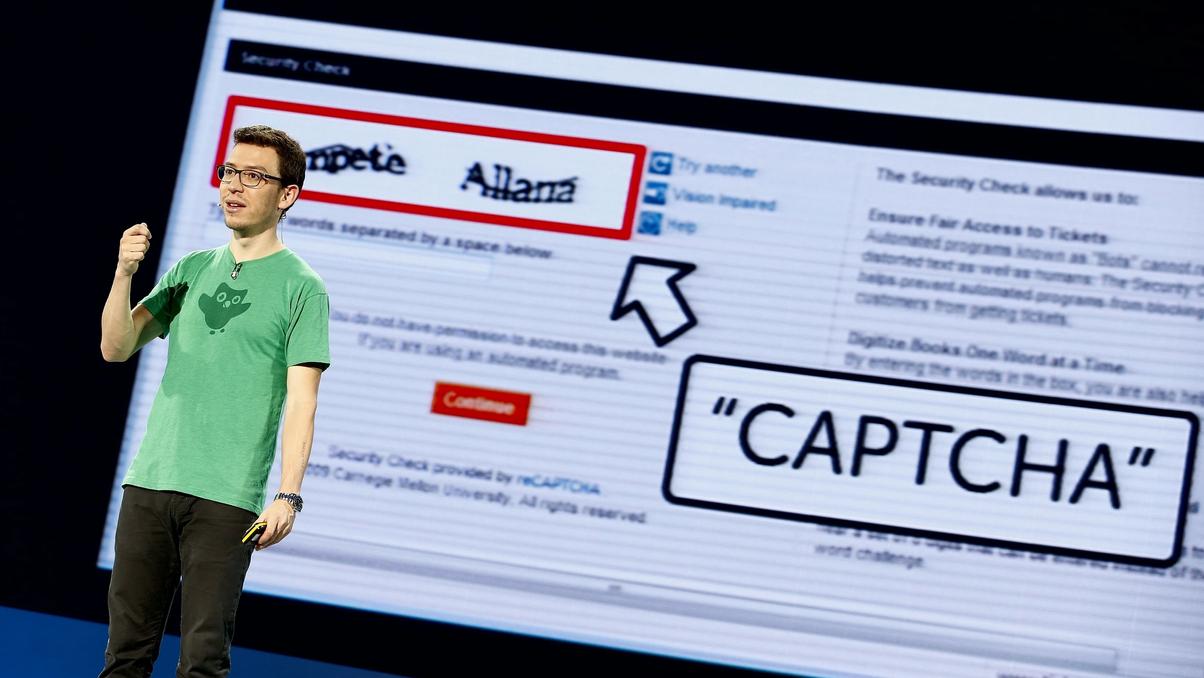

Get Ready To Struggle Even More To Prove You’re Not A Robot

If you thought CAPTCHAs were annoying already, just wait. Shutterstock

As AI gets more advanced, it’s becoming harder for humans to prove that we’re, well, human. We’ve all gotten used to completing those little tasks before gaining access to certain online platforms, but the “not a robot” tests just aren’t cutting it anymore.

A brief CAPTCHA history

Let’s take a look at the primary system for rooting out bots. CAPTCHA — or “Completely Automated Public Turing test to tell Computers and Humans Apart” — has been around since the early internet to help weed out programs designed to overwhelm certain systems.

It started out as simple boxes to check or a string of slightly distorted letters and/or numbers to type out. From there, we started to get the array of boxes and a nerve-racking instruction to select only those containing an image of a specific item.

But as AI gets smarter, there’s now a need for even trickier rituals, like rotating images in a very specific manner or completing other puzzles that AI can’t figure out, at least not yet.

Finding the right balance

There might be a point in the not-too-distant future at which CAPTCHAs are simply unable to identify bots at all. There’s really no way to predict what that might mean for our high-tech society, but in the meantime the folks who are tasked with creating the next batch of puzzles have to achieve an effective mix of three factors:

- Usability: Simple enough for humans

- Security: Too tough for bots

- Accuracy: The ability to tell the difference

But a 2020 study found that bots are way better than people at cracking many CAPTCHA codes, and they’ll do it a lot cheaper than human hackers. So without sacrificing some serious usability (aka making your life online more annoying), there’s probably no realistic way to achieve security and accuracy.

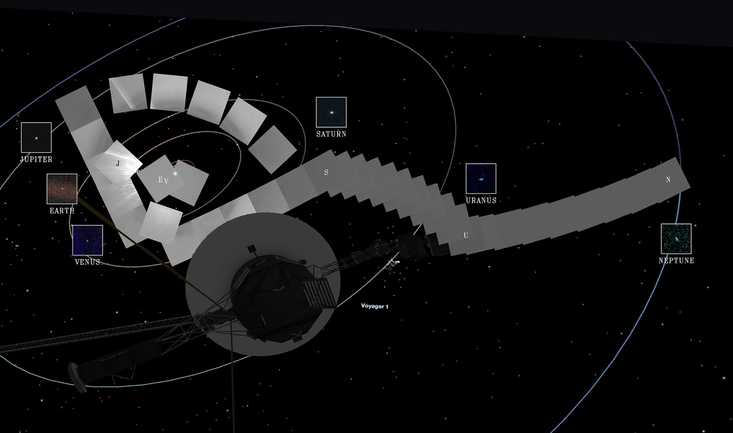

Why Is The Aging Voyager 1 Probe Sending Back Incoherent Communications?

It's been speaking gibberish for a few months and officials are concerned.

Why Is The Aging Voyager 1 Probe Sending Back Incoherent Communications?

It's been speaking gibberish for a few months and officials are concerned. One Woman’s Massive Donation Is Wiping Out Tuition At This Medical School

Her inheritance came with the instruction to do "whatever you think is right."

One Woman’s Massive Donation Is Wiping Out Tuition At This Medical School

Her inheritance came with the instruction to do "whatever you think is right." Woman’s Pets Will Inherit Her Multimillion-Dollar Fortune, Not Her Kids

It's not the first time four-legged heirs were named in a will.

Woman’s Pets Will Inherit Her Multimillion-Dollar Fortune, Not Her Kids

It's not the first time four-legged heirs were named in a will.